---
license: apache-2.0
tags:
- merge
- mergekit
- lazymergekit
- AlekseiPravdin/KSI-RP-NSK-128k-7B
- flammenai/flammen18X-mistral-7B
- gguf
- Q2_K
- Q3_K_L
- Q3_K_M
- Q3_K_S
- Q4_0
- Q4_1
- Q4_K_S
- Q4_k_m
- Q5_0
- Q5_1
- Q6_K
- Q5_K_S
- Q5_k_m
- Q8_0
- 128k
language:
- en
- ru
- th
---

# KSI-RPG-128k-7B-GGUF ⭐️⭐️⭐️

KSI-RPG-128k-7B is a merge of the following models using [mergekit](https://github.com/cg123/mergekit):

* [AlekseiPravdin/KSI-RP-NSK-128k-7B](https://huggingface.co/AlekseiPravdin/KSI-RP-NSK-128k-7B)

* [flammenai/flammen18X-mistral-7B](https://huggingface.co/flammenai/flammen18X-mistral-7B)

## 🧩 Configuration

```yaml
slices:
  - sources:
      - model: AlekseiPravdin/KSI-RP-NSK-128k-7B
        layer_range: [0, 32]
      - model: flammenai/flammen18X-mistral-7B
        layer_range: [0, 32]
merge_method: slerp
base_model: AlekseiPravdin/KSI-RP-NSK-128k-7B
parameters:
  t:
    - filter: self_attn
      value: [0, 0.5, 0.3, 0.7, 1]
    - filter: mlp
      value: [1, 0.5, 0.7, 0.3, 0]
    - value: 0.5
dtype: bfloat16
```
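To illustrate what the `slerp` merge method and the `t` lists in the configuration mean, here is a minimal pure-Python sketch. It is not mergekit's code: the function names `slerp` and `layer_t` are hypothetical, and the assumption that a list of `t` values is linearly interpolated across the layer range is a simplification of how mergekit treats such gradients. In mergekit, `t = 0` is documented as returning the base model's tensor and `t = 1` the other model's.

```python
import math


def slerp(t, v0, v1, eps=1e-8):
    """Spherical linear interpolation between two equal-length vectors.

    Illustrative only: real merges operate on full weight tensors.
    """
    norm0 = math.sqrt(sum(x * x for x in v0))
    norm1 = math.sqrt(sum(x * x for x in v1))
    if norm0 < eps or norm1 < eps:
        # Degenerate input: fall back to plain linear interpolation.
        return [(1 - t) * a + t * b for a, b in zip(v0, v1)]

    # Angle between the two directions, clamped for numerical safety.
    dot = sum(a * b for a, b in zip(v0, v1)) / (norm0 * norm1)
    theta = math.acos(max(-1.0, min(1.0, dot)))
    sin_theta = math.sin(theta)
    if abs(sin_theta) < eps:
        # Nearly parallel vectors: linear interpolation is stable here.
        return [(1 - t) * a + t * b for a, b in zip(v0, v1)]

    w0 = math.sin((1 - t) * theta) / sin_theta
    w1 = math.sin(t * theta) / sin_theta
    return [w0 * a + w1 * b for a, b in zip(v0, v1)]


def layer_t(gradient, layer_idx, num_layers):
    """Assumed mapping: linearly interpolate a list of t values over layers."""
    if num_layers == 1 or len(gradient) == 1:
        return gradient[0]
    pos = layer_idx / (num_layers - 1) * (len(gradient) - 1)
    lo = int(math.floor(pos))
    hi = min(lo + 1, len(gradient) - 1)
    frac = pos - lo
    return (1 - frac) * gradient[lo] + frac * gradient[hi]


# t schedules taken from the configuration above.
SELF_ATTN_T = [0, 0.5, 0.3, 0.7, 1]
MLP_T = [1, 0.5, 0.7, 0.3, 0]

if __name__ == "__main__":
    for idx in (0, 15, 31):
        print(idx, layer_t(SELF_ATTN_T, idx, 32), layer_t(MLP_T, idx, 32))
```

Under these assumptions, the first layer takes its self-attention weights from the base model and its MLP weights from the other model, the last layer does the opposite, and tensors matching neither filter use `t = 0.5`.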

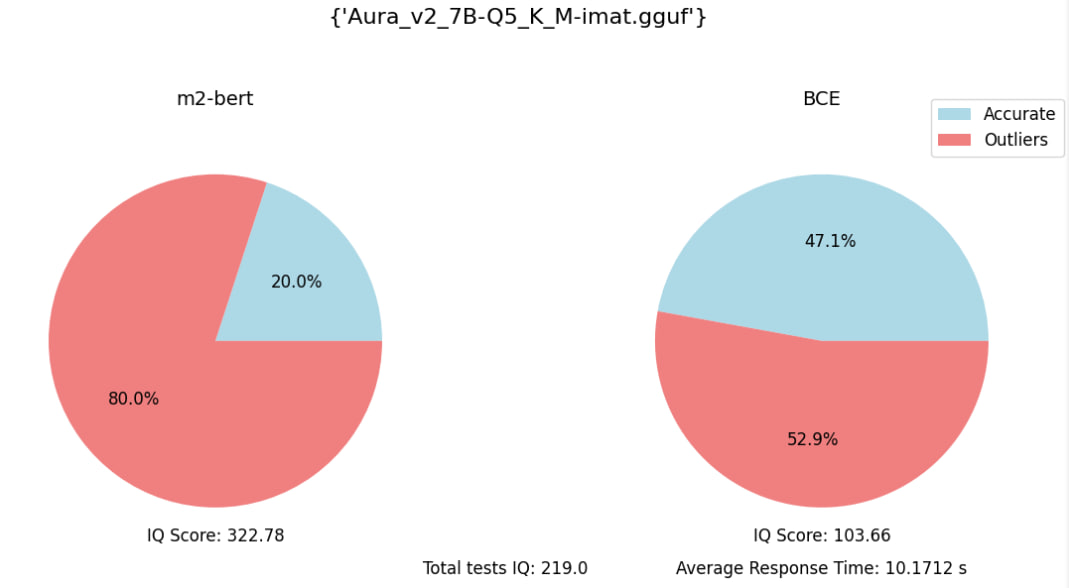

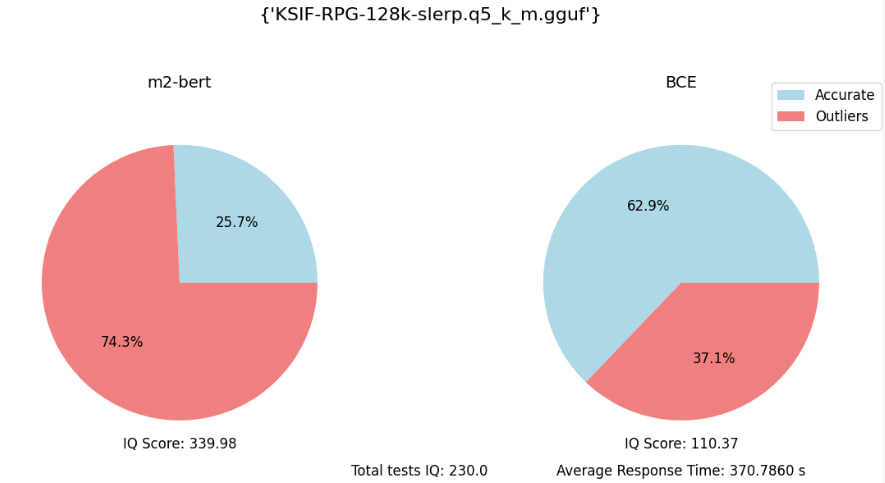

Eval embedding benchmark (with 70 specific questions):
