---

license: apache-2.0

---

# Depth Anything Core ML Models

See [the Files tab](https://huggingface.co/coreml-projects/depth-anything/tree/main) for converted models.

The Depth Anything model was introduced in the paper [Depth Anything: Unleashing the Power of Large-Scale Unlabeled Data](https://arxiv.org/abs/2401.10891) by Lihe Yang et al. and first released in [this repository](https://github.com/LiheYoung/Depth-Anything).

An [online demo](https://huggingface.co/spaces/LiheYoung/Depth-Anything) is also available.

Disclaimer: The team releasing Depth Anything did not write a model card for this model, so this model card has been written by the Hugging Face team.

## Model description

Depth Anything leverages the [DPT](https://huggingface.co/docs/transformers/model_doc/dpt) architecture with a [DINOv2](https://huggingface.co/docs/transformers/model_doc/dinov2) backbone.

The model is trained on ~62 million images, obtaining state-of-the-art results for both relative and absolute depth estimation.

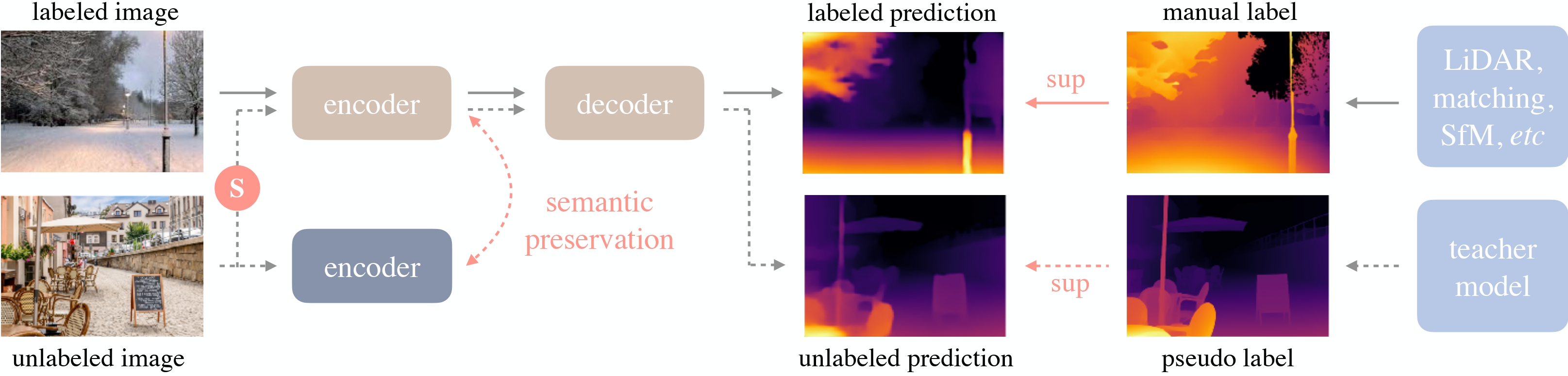

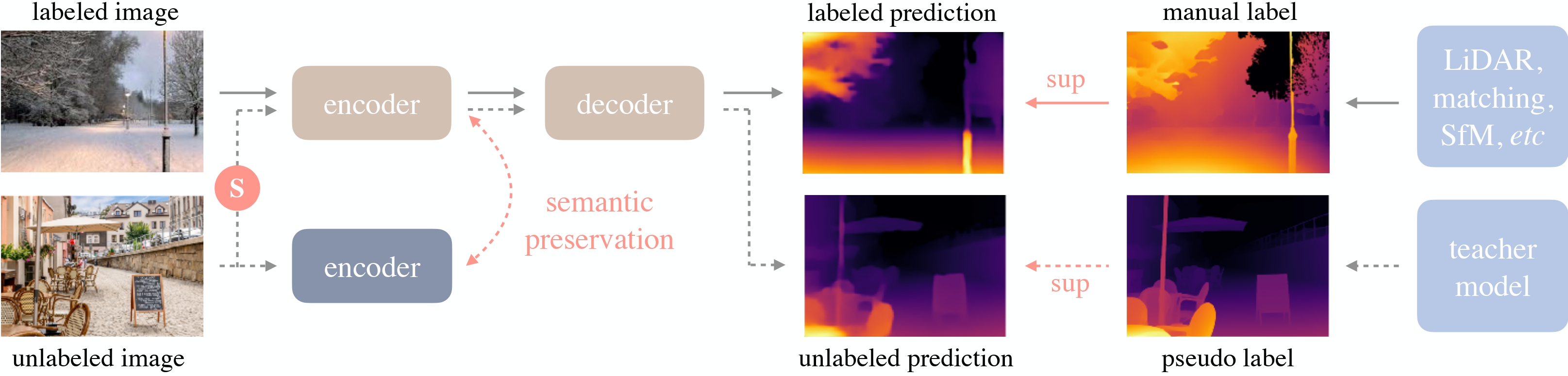

Depth Anything overview. Taken from the original paper.

## Download

Install `huggingface-hub`:

```bash
pip install huggingface-hub
```

To download one of the `.mlpackage` folders to the `models` directory:

```bash
huggingface-cli download \
  --local-dir models --local-dir-use-symlinks False \
  coreml-projects/depth-anything \
  --include "DepthAnythingSmallF16.mlpackage/*"
```

To download everything, skip the `--include` argument.
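The same download can likely be scripted from Python, since `huggingface-hub` also exposes a `snapshot_download` function. The following is a minimal sketch rather than an official recipe: the helper names `include_patterns` and `download` are hypothetical and exist only for illustration, while the repository ID, the `models` directory, and the `DepthAnythingSmallF16` package name are taken from the command above.

```python
from pathlib import Path

REPO_ID = "coreml-projects/depth-anything"  # repository named in this README


def include_patterns(model_name=None):
    """Hypothetical helper: glob patterns for one .mlpackage folder.

    Returns None when no model name is given, which mirrors skipping
    the `--include` argument and selects every file in the repository.
    """
    if model_name is None:
        return None
    return [f"{model_name}.mlpackage/*"]


def download(model_name=None, local_dir="models"):
    """Hypothetical helper: fetch one model package, or the whole repo."""
    # Imported lazily so include_patterns() works without the package installed.
    from huggingface_hub import snapshot_download

    path = snapshot_download(
        repo_id=REPO_ID,
        local_dir=local_dir,
        allow_patterns=include_patterns(model_name),
    )
    return Path(path)
```

Under these assumptions, `download("DepthAnythingSmallF16")` should place the package under `models/`, and `download()` with no argument should fetch the full repository.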
