---

base_model:
- meta-llama/Llama-3.1-8B-Instruct
- google/siglip-so400m-patch14-384
datasets:
- starriver030515/FUSION-Pretrain-10M
- starriver030515/FUSION-Finetune-12M
license: apache-2.0
pipeline_tag: image-text-to-text
library_name: transformers

---

# Model Card for FUSION

This is the FUSION-LLaMA3.1-8B checkpoint obtained after Stage 1, Stage 1.5, and Stage 2 training.

This repository contains the model described in the paper [FUSION: Fully Integration of Vision-Language Representations for Deep Cross-Modal Understanding](https://huggingface.co/papers/2504.09925).

## Model Details

**Model Description**

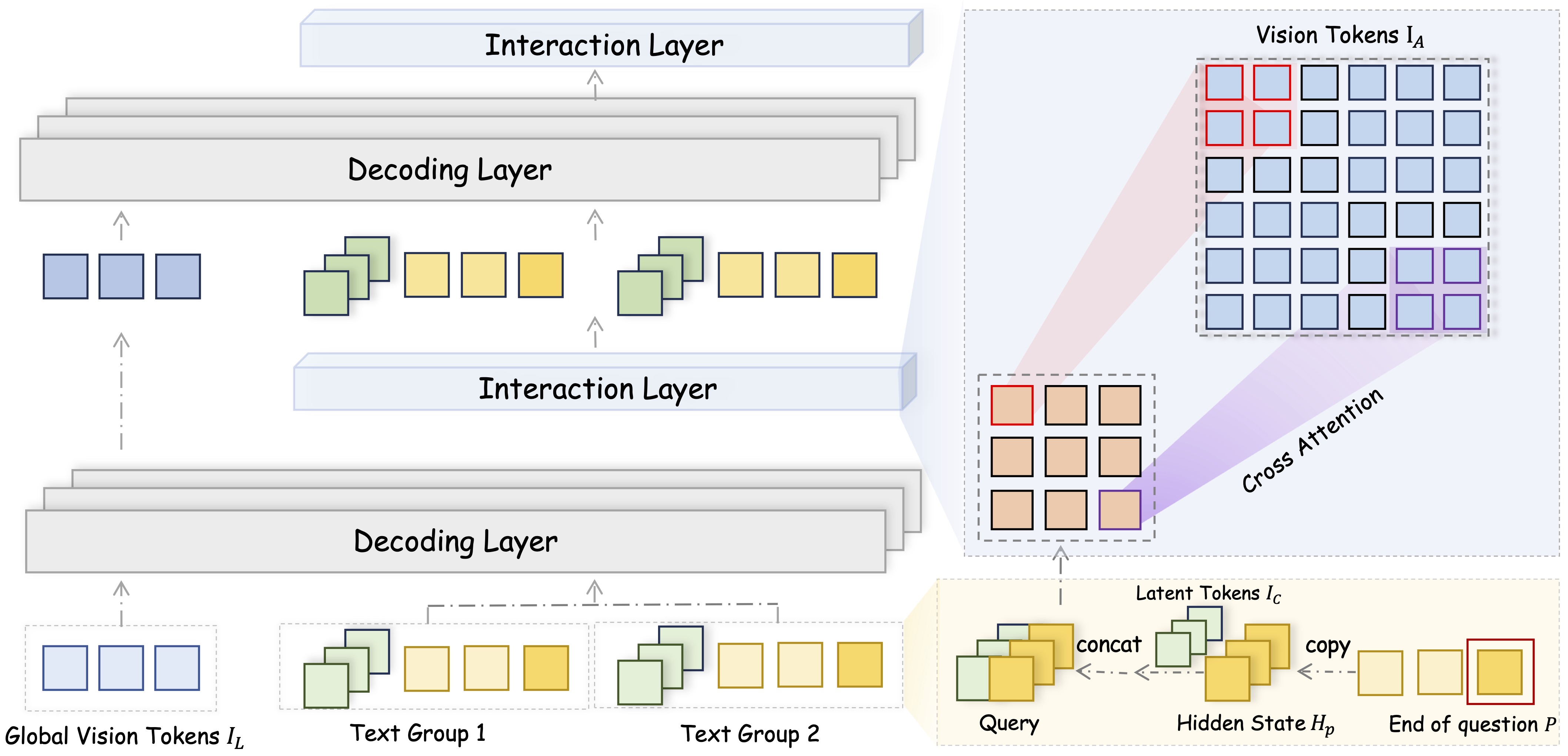

FUSION is a family of multimodal large language models that adopts a fully integrated vision-language architecture, enabling comprehensive and fine-grained cross-modal understanding. In contrast to prior approaches that primarily perform shallow or late-stage modality fusion during the LLM decoding phase, FUSION achieves deep, dynamic integration across the entire vision-language processing pipeline.

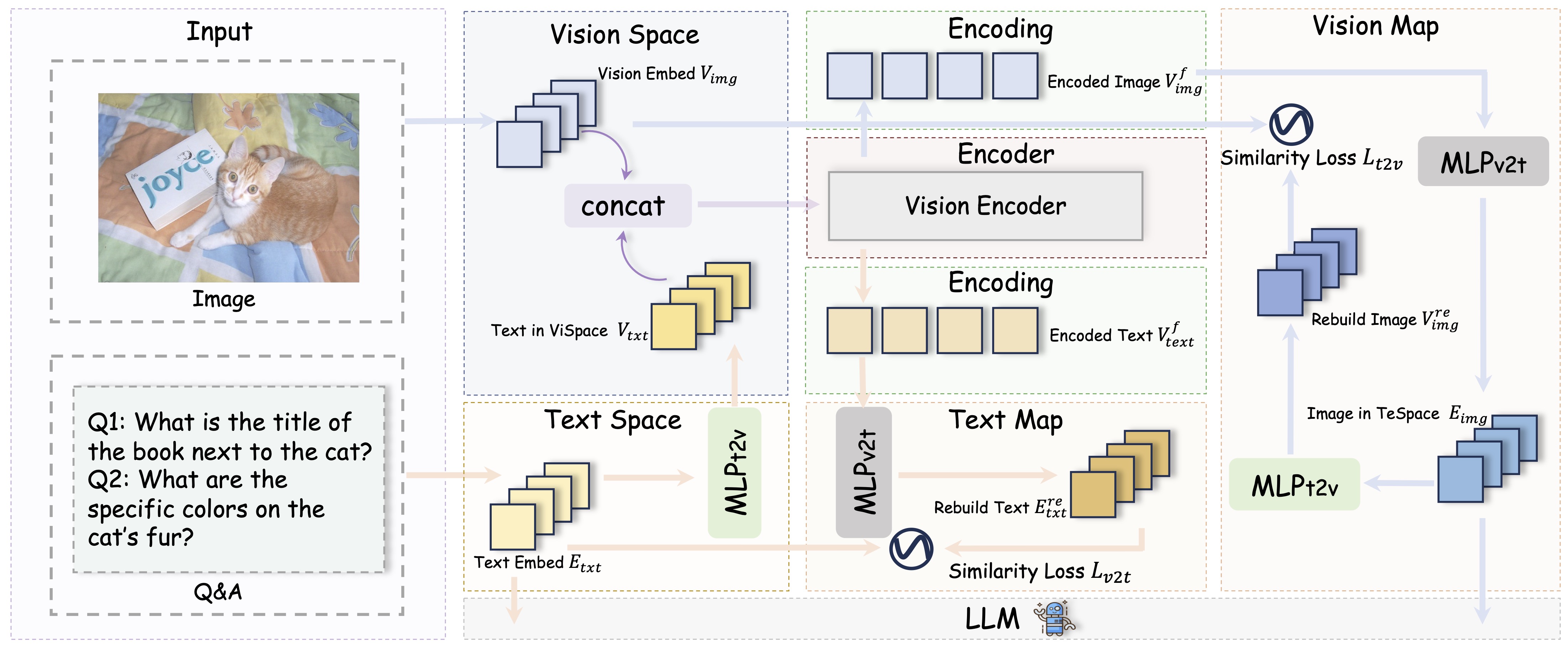

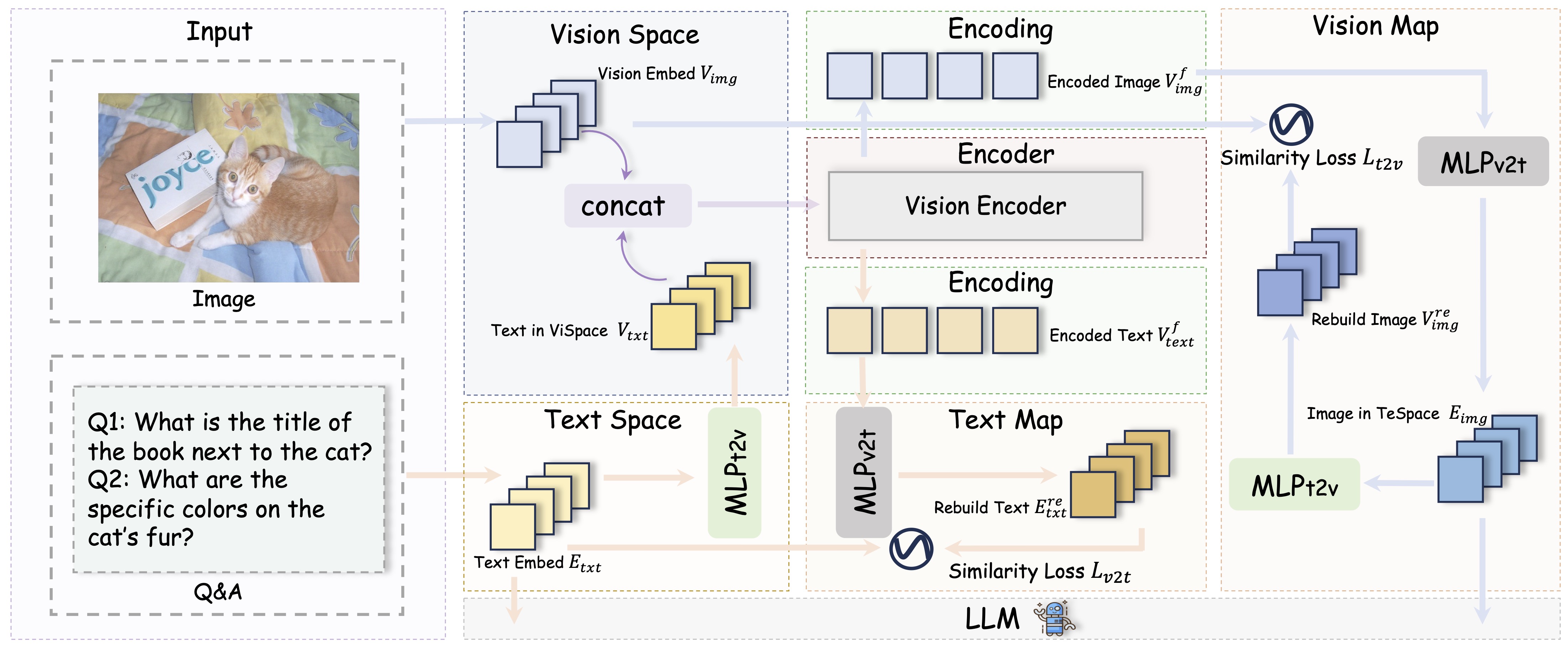

To enable this, FUSION utilizes Text-Guided Unified Vision Encoding, which incorporates textual context directly into the vision encoder. This design allows for pixel-level vision-language alignment and facilitates early-stage cross-modal interaction.
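The following is a rough, hypothetical illustration of what "incorporating textual context into the vision encoder" can mean. Image patch tokens and text tokens attend to each other in one self-attention pass, and only the patch outputs are kept, so the same image produces different patch features for different questions. This is a toy built on stated assumptions: one head, identity query/key/value projections, plain Python lists, and the made-up helpers `self_attention` and `text_guided_encode`. It is not FUSION's actual encoder.

```python
import math
from typing import List

Vector = List[float]


def softmax(scores: List[float]) -> List[float]:
    # Subtract the max for numerical stability before exponentiating.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def self_attention(tokens: List[Vector]) -> List[Vector]:
    # Single-head attention with identity Q/K/V projections (toy assumption).
    d = len(tokens[0])
    outputs = []
    for query in tokens:
        weights = softmax([dot(query, key) / math.sqrt(d) for key in tokens])
        outputs.append([sum(w * v[i] for w, v in zip(weights, tokens)) for i in range(d)])
    return outputs


def text_guided_encode(patches: List[Vector], text: List[Vector]) -> List[Vector]:
    # Prepend text tokens so every patch can attend to the question,
    # then return only the (now text-conditioned) patch features.
    joint = text + patches
    encoded = self_attention(joint)
    return encoded[len(text):]


patches = [[1.0, 0.0], [0.0, 1.0]]
feats_a = text_guided_encode(patches, [[2.0, 0.0]])
feats_b = text_guided_encode(patches, [[0.0, 2.0]])
print(feats_a != feats_b)  # True: same image, different question-conditioned features
```

A vision-only encoder would return identical patch features for both questions. In this sketch, the text tokens change the attention weights over the patches, so the outputs differ.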

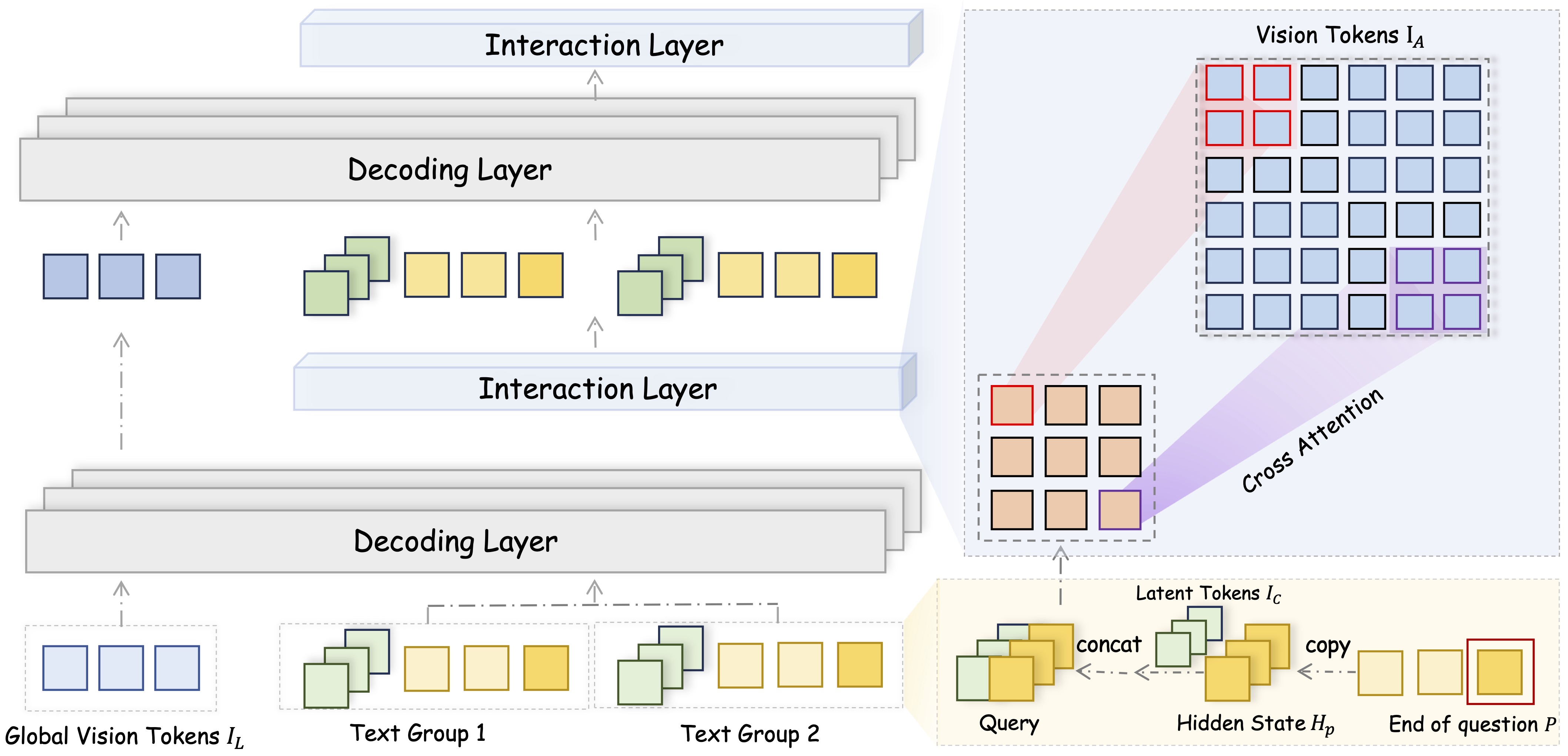

During decoding, FUSION employs a Context-Aware Recursive Alignment Decoding strategy. This component dynamically aggregates and refines visual features based on the evolving textual context at each decoding step, allowing the model to capture question-level semantics with high precision.
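The hypothetical sketch below pictures the per-step behaviour described above. At every decoding step, the current text state acts as a cross-attention query over the visual features. In this toy, the query is also mixed with the previous step's visual summary, which is the "recursive" part. The names `aggregate_visual` and `recursive_alignment`, and the `mix` weight, are illustrative assumptions. The paper's module may be structured quite differently.

```python
import math
from typing import List

Vector = List[float]


def softmax(scores: List[float]) -> List[float]:
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def aggregate_visual(query: Vector, visual: List[Vector]) -> Vector:
    # Cross-attention: weight each visual feature by its scaled similarity to the query.
    d = len(query)
    weights = softmax([sum(q * v for q, v in zip(query, feat)) / math.sqrt(d) for feat in visual])
    return [sum(w * feat[i] for w, feat in zip(weights, visual)) for i in range(d)]


def recursive_alignment(text_states: List[Vector], visual: List[Vector],
                        mix: float = 0.5) -> List[Vector]:
    # One visual summary per decoding step; each step's query combines the
    # current text state with the previous summary (hypothetical recursion).
    summary = [0.0] * len(visual[0])
    summaries = []
    for h in text_states:
        query = [hi + mix * si for hi, si in zip(h, summary)]
        summary = aggregate_visual(query, visual)
        summaries.append(summary)
    return summaries


visual = [[1.0, 0.0], [0.0, 1.0]]        # two visual features
text_states = [[3.0, 0.0], [0.0, 3.0]]   # text context at two decoding steps
steps = recursive_alignment(text_states, visual)
print(steps[0][0] > steps[0][1], steps[1][1] > steps[1][0])  # True True
```

As the text context shifts between steps, the aggregated visual summary shifts toward the features most relevant to the current step.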

To further strengthen alignment and narrow the semantic gap between modalities, FUSION adds a Dual-Supervised Semantic Mapping Loss, which provides simultaneous supervision in both the visual and textual embedding spaces. This dual-path guidance improves the consistency and semantic coherence of the fused representations.
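Here is a minimal sketch of a loss supervised in both embedding spaces. It assumes linear projections `v2t` (vision to text) and `t2v` (text to vision) and uses mean squared error as the distance. The paper may use different mappings, distances, or weights. The `alpha` and `beta` terms are hypothetical balancing knobs.

```python
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def matvec(m: Matrix, v: Vector) -> Vector:
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def mse(a: Vector, b: Vector) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def dual_semantic_mapping_loss(vis: Vector, txt: Vector, v2t: Matrix, t2v: Matrix,
                               alpha: float = 1.0, beta: float = 1.0) -> float:
    # Text-space term: project the visual feature into text space, compare to the text embedding.
    loss_text_space = mse(matvec(v2t, vis), txt)
    # Vision-space term: project the text embedding into vision space, compare to the visual feature.
    loss_vision_space = mse(matvec(t2v, txt), vis)
    return alpha * loss_text_space + beta * loss_vision_space


identity = [[1.0, 0.0], [0.0, 1.0]]
swap = [[0.0, 1.0], [1.0, 0.0]]
vis, txt = [1.0, 0.0], [0.0, 1.0]
print(dual_semantic_mapping_loss(vis, txt, identity, identity))  # 2.0
print(dual_semantic_mapping_loss(vis, txt, swap, swap))          # 0.0
```

The loss reaches zero only when both mappings succeed. A projection that aligns only one direction still pays the penalty in the other space.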

**Base Model**

**LLM**: [meta-llama/Llama-3.1-8B-Instruct](https://huggingface.co/meta-llama/Llama-3.1-8B-Instruct)

**Vision Encoder**: [google/siglip-so400m-patch14-384](https://huggingface.co/google/siglip-so400m-patch14-384)
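The suffix `patch14-384` in the vision encoder's name indicates 384x384 inputs split into non-overlapping 14x14 patches. The arithmetic below counts the resulting patch tokens per image. It describes only the raw SigLIP output. The card does not state whether FUSION resizes, tiles, or compresses these tokens before passing them to the LLM.

```python
def num_patch_tokens(image_size: int, patch_size: int) -> int:
    # Non-overlapping square patches: floor(image_size / patch_size) per side.
    per_side = image_size // patch_size
    return per_side * per_side


# google/siglip-so400m-patch14-384: 384x384 input, 14x14 patches.
print(384 // 14, num_patch_tokens(384, 14))  # 27 729
```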

## Training Details

**Training Strategies**

FUSION is trained with a three-stage framework designed to ensure comprehensive alignment and integration between the visual and linguistic modalities.

- **Stage 1: Foundational Semantic Alignment**: We pretrain the vision encoder on large-scale image-caption datasets to establish precise semantic alignment between visual and textual representations.

- **Stage 1.5: Contextual Multimodal Fusion**: Unlike Stage 1, this intermediate stage adds various types of QA data alongside image-caption pairs. It is designed to improve the model's ability to align vision and language representations across a broad range of scenarios.

- **Stage 2: Visual Instruction Tuning**: At this stage, we expose the model to a variety of visual tasks, enabling it to answer downstream vision-related questions effectively.

**Training Data**

- [10M FUSION Alignment Data](https://huggingface.co/datasets/starriver030515/FUSION-Pretrain-10M) for Stage 1

- [12M FUSION Curated Instruction Tuning Data](https://huggingface.co/datasets/starriver030515/FUSION-Finetune-12M) for Stage 1.5 and Stage 2
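The stage-to-data mapping from the lists above can be written as a small config, for example to drive a training script. This is a hypothetical structure: the `Stage` dataclass and the `SCHEDULE` name are assumptions. The card does not say how the 12M set is divided between Stage 1.5 and Stage 2, so both entries simply point at the same dataset ID.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Stage:
    name: str
    goal: str
    dataset: str  # Hugging Face dataset ID


SCHEDULE: List[Stage] = [
    Stage("Stage 1", "Foundational Semantic Alignment", "starriver030515/FUSION-Pretrain-10M"),
    Stage("Stage 1.5", "Contextual Multimodal Fusion", "starriver030515/FUSION-Finetune-12M"),
    Stage("Stage 2", "Visual Instruction Tuning", "starriver030515/FUSION-Finetune-12M"),
]

for stage in SCHEDULE:
    print(f"{stage.name}: {stage.goal} <- {stage.dataset}")
```

Each ID could then be passed to `datasets.load_dataset`. Check the dataset pages for the available configurations and splits, which this card does not list.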

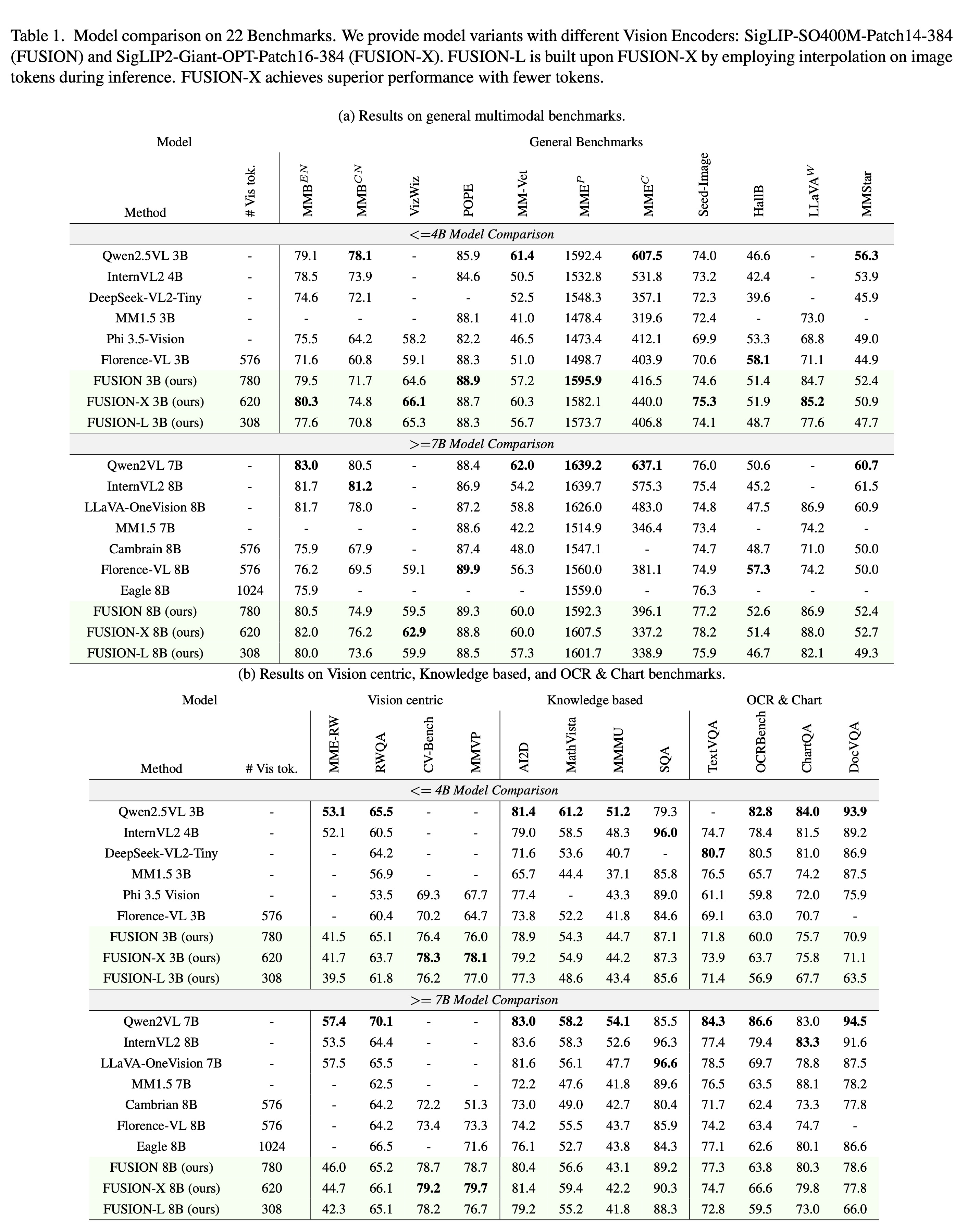

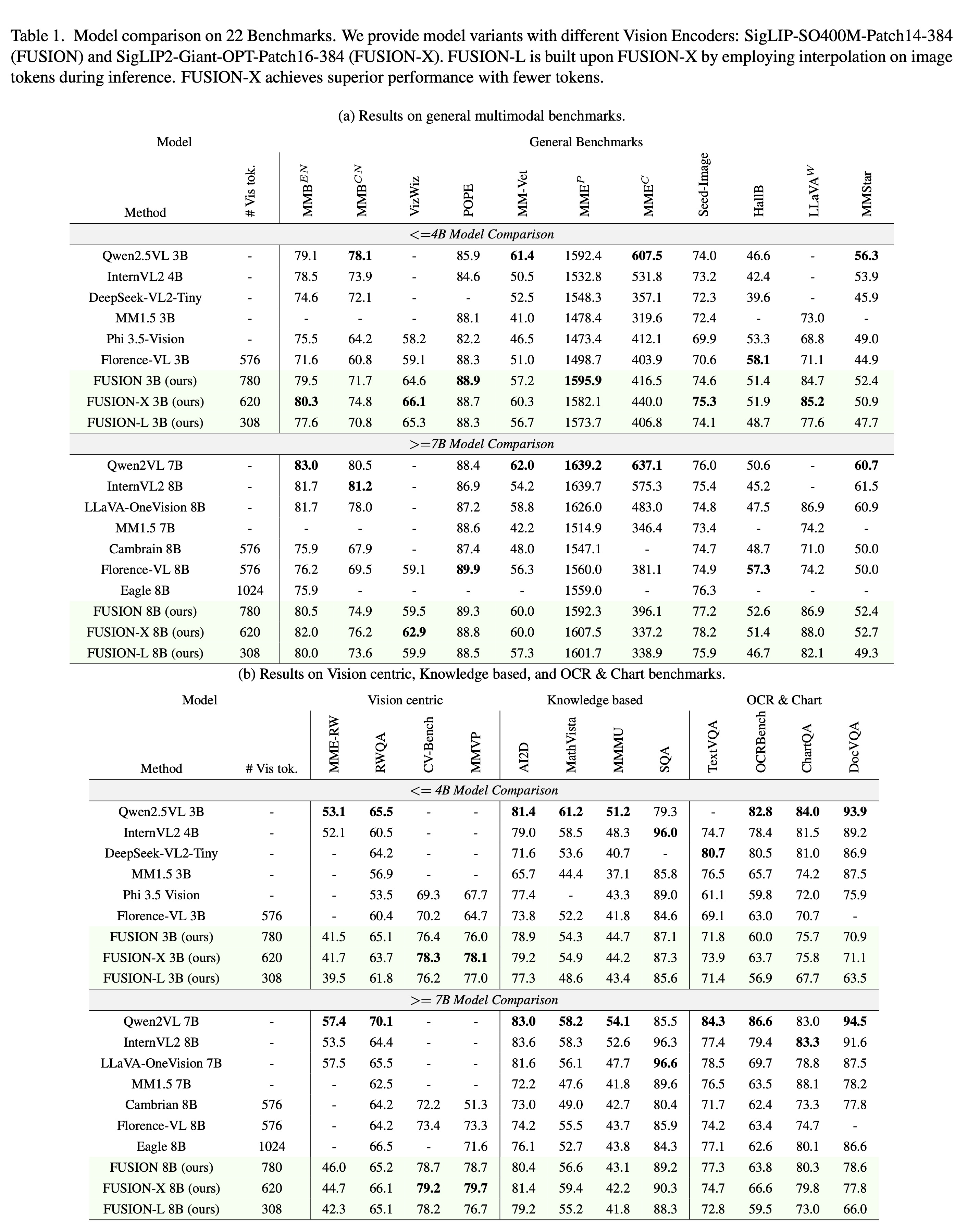

## Performance

**Where to send questions or comments about the model:**

https://github.com/starriver030515/FUSION/issues

## Paper or resources for more information

- [https://arxiv.org/abs/2504.09925](https://arxiv.org/abs/2504.09925)

- [https://github.com/starriver030515/FUSION](https://github.com/starriver030515/FUSION)

## Citation

If you find FUSION useful for your research and applications, please cite using this BibTeX:

```bibtex

@misc{liu2025fusionfullyintegrationvisionlanguage,
  title={FUSION: Fully Integration of Vision-Language Representations for Deep Cross-Modal Understanding},
  author={Zheng Liu and Mengjie Liu and Jingzhou Chen and Jingwei Xu and Bin Cui and Conghui He and Wentao Zhang},
  year={2025},
  eprint={2504.09925},
  archivePrefix={arXiv},
  primaryClass={cs.CV},
  url={https://arxiv.org/abs/2504.09925},
}

```
