---
library_name: transformers
tags:
- Omni-modal-LLM
- Multi-modal-LLM
- Emotional-spoken-dialogue
license: apache-2.0
datasets:
- Emova-ollm/emova-alignment-7m
- Emova-ollm/emova-sft-4m
- Emova-ollm/emova-sft-speech-231k
language:
- en
- zh
base_model:
- Emova-ollm/qwen2vit600m
- Emova-ollm/Qwen2.5-72B-Instruct_add_speech_token_4096_nostrip
model-index:
- name: emova-qwen-2-5-72b-hf
  results:
  - task:
      type: multimodal
    dataset:
      name: AI2D
      type: ai2d
    metrics:
    - type: accuracy
      value: 85.8
      name: accuracy
      verified: true
  - task:
      type: multimodal
    dataset:
      name: ChartQA
      type: chartqa
    metrics:
    - type: accuracy
      value: 88.7
      name: accuracy
      verified: true
  - task:
      type: multimodal
    dataset:
      name: DocVQA
      type: docvqa
    metrics:
    - type: accuracy
      value: 95.9
      name: accuracy
      verified: true
  - task:
      type: multimodal
    dataset:
      name: InfoVQA
      type: infovqa
    metrics:
    - type: accuracy
      value: 83.2
      name: accuracy
      verified: true
  - task:
      type: multimodal
    dataset:
      name: MathVerse
      type: mathverse
    metrics:
    - type: accuracy
      value: 50
      name: accuracy
      verified: true
  - task:
      type: multimodal
    dataset:
      name: MathVista
      type: mathvista
    metrics:
    - type: accuracy
      value: 69.9
      name: accuracy
      verified: true
  - task:
      type: multimodal
    dataset:
      name: MMBench
      type: mmbench
    metrics:
    - type: accuracy
      value: 86.4
      name: accuracy
      verified: true
  - task:
      type: multimodal
    dataset:
      name: MME
      type: mme
    metrics:
    - type: score
      value: 2402
      name: score
      verified: true
  - task:
      type: multimodal
    dataset:
      name: MMVet
      type: mmvet
    metrics:
    - type: accuracy
      value: 64.8
      name: accuracy
      verified: true
  - task:
      type: multimodal
    dataset:
      name: OCRBench
      type: ocrbench
    metrics:
    - type: accuracy
      value: 843
      name: accuracy
      verified: true
  - task:
      type: multimodal
    dataset:
      name: RealWorldQA
      type: realworldqa
    metrics:
    - type: accuracy
      value: 71
      name: accuracy
      verified: true
  - task:
      type: multimodal
    dataset:
      name: Seed-Bench-Image
      type: seed-bench-image
    metrics:
    - type: accuracy
      value: 76.6
      name: accuracy
      verified: true
  - task:
      type: multimodal
    dataset:
      name: Science-QA
      type: science-qa
    metrics:
    - type: accuracy
      value: 98.2
      name: accuracy
      verified: true
  - task:
      type: multimodal
    dataset:
      name: TextVQA
      type: textvqa
    metrics:
    - type: accuracy
      value: 81.4
      name: accuracy
      verified: true
  - task:
      name: Automatic Speech Recognition
      type: automatic-speech-recognition
    dataset:
      name: LibriSpeech (clean)
      type: librispeech_asr
      config: clean
      split: test
      args:
        language: en
    metrics:
    - name: Test WER
      type: wer
      value: 2.9
---

EMOVA-Qwen-2.5-72B-HF


🤗 EMOVA-Models | 🤗 EMOVA-Datasets | 🤗 EMOVA-Demo

📄 Paper | 🌐 Project-Page | 💻 Github | 💻 EMOVA-Speech-Tokenizer-Github

Model Summary

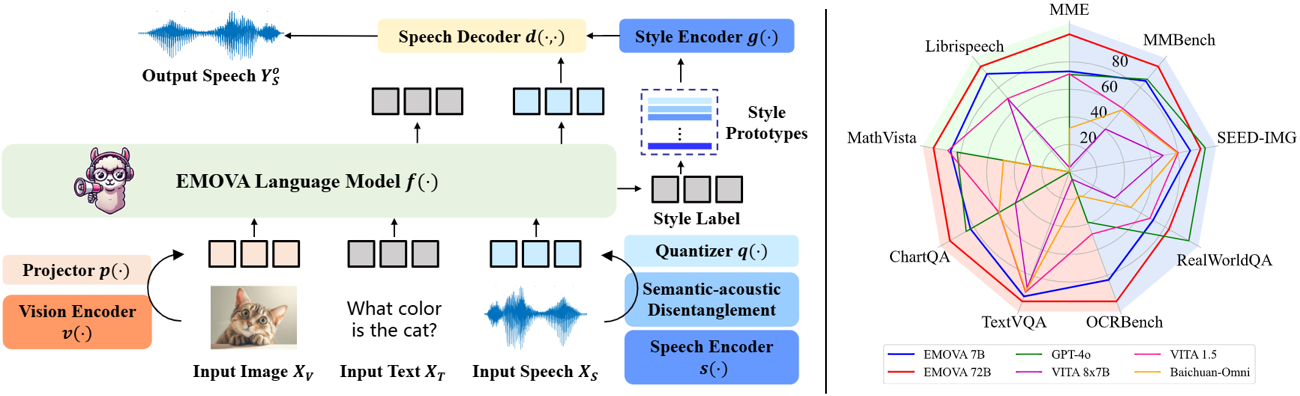

EMOVA (EMotionally Omni-present Voice Assistant) is a novel end-to-end omni-modal LLM that can see, hear and speak without relying on external models. Given omni-modal (i.e., textual, visual and speech) inputs, EMOVA can generate both textual and speech responses with vivid emotional controls by using a speech decoder together with a style encoder. It has general omni-modal understanding and generation capabilities and is particularly strong in advanced vision-language understanding, emotional spoken dialogue, and spoken dialogue with structured data understanding. Its key advantages are:

- State-of-the-art omni-modality performance: EMOVA achieves results comparable to the state of the art on vision-language and speech benchmarks simultaneously. Our best-performing model, EMOVA-72B, even surpasses commercial models including GPT-4o and Gemini Pro 1.5 on several benchmarks.

- Emotional spoken dialogue: A semantic-acoustic disentangled speech tokenizer and a lightweight style control module are adopted for seamless omni-modal alignment and diverse speech style controllability. EMOVA supports bilingual (Chinese and English) spoken dialogue with 24 speech style controls (i.e., 2 speakers, 3 pitches and 4 emotions).

- Diverse configurations: We open-source 3 configurations, EMOVA-3B/7B/72B, to support omni-modal usage under different computational budgets. Check our Model Zoo to find the best fit for your hardware!
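The count of 24 style controls in the list above is the product 2 × 3 × 4. A minimal sketch that enumerates the combinations follows. Apart from `"female"`, which appears in the usage snippet on this page, the speaker, pitch and emotion labels are placeholders assumed for illustration, not the model's documented option names.

```python
from itertools import product

# Placeholder labels: only "female" appears in this model card; the rest are assumed.
SPEAKERS = ("female", "male")
PITCHES = ("low", "normal", "high")
EMOTIONS = ("neutral", "happy", "sad", "angry")


def style_combinations():
    """Return every (speaker, pitch, emotion) triple."""
    return list(product(SPEAKERS, PITCHES, EMOTIONS))


combos = style_combinations()
print(len(combos))  # 2 * 3 * 4 = 24
```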

Performance

| Benchmarks | EMOVA-3B | EMOVA-7B | EMOVA-72B | GPT-4o | VITA 8x7B | VITA 1.5 | Baichuan-Omni |
|---|---|---|---|---|---|---|---|
| MME | 2175 | 2317 | 2402 | 2310 | 2097 | 2311 | 2187 |
| MMBench | 79.2 | 83.0 | 86.4 | 83.4 | 71.8 | 76.6 | 76.2 |
| SEED-Image | 74.9 | 75.5 | 76.6 | 77.1 | 72.6 | 74.2 | 74.1 |
| MM-Vet | 57.3 | 59.4 | 64.8 | - | 41.6 | 51.1 | 65.4 |
| RealWorldQA | 62.6 | 67.5 | 71.0 | 75.4 | 59.0 | 66.8 | 62.6 |
| TextVQA | 77.2 | 78.0 | 81.4 | - | 71.8 | 74.9 | 74.3 |
| ChartQA | 81.5 | 84.9 | 88.7 | 85.7 | 76.6 | 79.6 | 79.6 |
| DocVQA | 93.5 | 94.2 | 95.9 | 92.8 | - | - | - |
| InfoVQA | 71.2 | 75.1 | 83.2 | - | - | - | - |
| OCRBench | 803 | 814 | 843 | 736 | 678 | 752 | 700 |
| ScienceQA-Img | 92.7 | 96.4 | 98.2 | - | - | - | - |
| AI2D | 78.6 | 81.7 | 85.8 | 84.6 | 73.1 | 79.3 | - |
| MathVista | 62.6 | 65.5 | 69.9 | 63.8 | 44.9 | 66.2 | 51.9 |
| MathVerse | 31.4 | 40.9 | 50.0 | - | - | - | - |
| LibriSpeech (WER↓) | 5.4 | 4.1 | 2.9 | - | 3.4 | 8.1 | - |
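The last row reports word error rate (WER), where lower is better. As a reminder of what the metric measures, here is a minimal sketch of the standard definition: word-level edit distance (substitutions, deletions, insertions) divided by the number of reference words, expressed as a percentage. The function name `word_error_rate` is illustrative, and this is not the evaluation script used to produce the numbers above, which may apply its own text normalization.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by reference length, in percent."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the ref words seen so far and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (ref_word != hyp_word)
            deletion = prev[j] + 1       # reference word missing from the hypothesis
            insertion = curr[j - 1] + 1  # extra word in the hypothesis
            curr[j] = min(substitution, deletion, insertion)
        prev = curr
    return 100.0 * prev[-1] / len(ref)


# One of six reference words is dropped by the hypothesis.
print(word_error_rate("the cat sat on the mat", "the cat sat on mat"))
```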

Usage

This repo contains the EMOVA-Qwen2.5-72B checkpoint organized in the Hugging Face format, so it can be loaded directly with the transformers Auto APIs.

from transformers import AutoModel, AutoProcessor

from PIL import Image

import torch

### Uncomment if you want to use Ascend NPUs

# import torch_npu

# from torch_npu.contrib import transfer_to_npu

# prepare models and processors

model = AutoModel.from_pretrained(
    "Emova-ollm/emova-qwen-2-5-72b-hf",
    torch_dtype=torch.bfloat16,
    attn_implementation='flash_attention_2',  # OR 'sdpa' for Ascend NPUs
    low_cpu_mem_usage=True,
    trust_remote_code=True).eval().cuda()

processor = AutoProcessor.from_pretrained("Emova-ollm/emova-qwen-2-5-72b-hf", trust_remote_code=True)

# only necessary for spoken dialogue
# Note: inference with speech inputs/outputs still requires the emova_speech_tokenizer package
# (https://huggingface.co/Emova-ollm/emova_speech_tokenizer_hf#install).
speech_tokenizer = AutoModel.from_pretrained("Emova-ollm/emova_speech_tokenizer_hf", torch_dtype=torch.float32, trust_remote_code=True).eval().cuda()
processor.set_speech_tokenizer(speech_tokenizer)

# Pick ONE of the three examples below; each one assigns `inputs`.

# Example 1: image-text
inputs = dict(
    text=[
        {"role": "system", "content": [{"type": "text", "text": "You are a helpful assistant."}]},
        {"role": "user", "content": [{"type": "image"}, {"type": "text", "text": "What's shown in this image?"}]},
        {"role": "assistant", "content": [{"type": "text", "text": "This image shows a red stop sign."}]},
        {"role": "user", "content": [{"type": "text", "text": "Describe the image in more details."}]},
    ],
    images=Image.open('path/to/image')
)

# Example 2: text-audio
inputs = dict(
    text=[{"role": "system", "content": [{"type": "text", "text": "You are a helpful assistant."}]}],
    audios='path/to/audio'
)

# Example 3: image-text-audio
inputs = dict(
    text=[{"role": "system", "content": [{"type": "text", "text": "You are a helpful assistant."}]}],
    images=Image.open('path/to/image'),
    audios='path/to/audio'
)

# run processors
has_speech = 'audios' in inputs.keys()
inputs = processor(**inputs, return_tensors="pt")
inputs = inputs.to(model.device)

# prepare generation arguments
gen_kwargs = {"max_new_tokens": 4096, "do_sample": False}  # add if necessary
speech_kwargs = {"speaker": "female", "output_wav_prefix": "output"} if has_speech else {}

# run generation
# for speech outputs, the saved wav paths are returned (see output_wav_prefix)
with torch.no_grad():
    outputs = model.generate(**inputs, **gen_kwargs)
# keep only the newly generated tokens (drop the prompt)
outputs = outputs[:, inputs['input_ids'].shape[1]:]
print(processor.batch_decode(outputs, skip_special_tokens=True, **speech_kwargs))
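The example inputs above share one message layout: a list of turns, each with a `role` and a list of typed `content` parts. Below is a minimal sketch of a helper that builds such turns. `make_turn` is a hypothetical name, not part of the EMOVA processor API, and it only mirrors the dictionary structure shown in the examples.

```python
from typing import Any, Dict, List, Optional


def make_turn(role: str, text: Optional[str] = None, with_image: bool = False) -> Dict[str, Any]:
    """Build one chat turn in the layout used by the examples above (hypothetical helper)."""
    content: List[Dict[str, str]] = []
    if with_image:
        # The image placeholder comes first, as in Example 1;
        # the image itself is passed separately through `images=`.
        content.append({"type": "image"})
    if text is not None:
        content.append({"type": "text", "text": text})
    return {"role": role, "content": content}


conversation = [
    make_turn("system", "You are a helpful assistant."),
    make_turn("user", "What's shown in this image?", with_image=True),
]
print(conversation)
```

The resulting list could then be passed as the `text=` argument in place of the hand-written dictionaries.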

Citation

@article{chen2024emova,
  title={Emova: Empowering language models to see, hear and speak with vivid emotions},
  author={Chen, Kai and Gou, Yunhao and Huang, Runhui and Liu, Zhili and Tan, Daxin and Xu, Jing and Wang, Chunwei and Zhu, Yi and Zeng, Yihan and Yang, Kuo and others},
  journal={arXiv preprint arXiv:2409.18042},
  year={2024}
}